Embedding artificial intelligence into operational policing

Steve Ainsworth, executive director of Public Safety at NEC Software Solutions UK, believes that transparency is key when it comes to embedding artificial intelligence into operational policing.

We live in a world where it is nigh on impossible to avoid leaving a digital footprint. Every day 500,000 tweets and close to six billion Google searches are made, in addition to the 306.4 billion emails sent. And even if you do eschew wearable tech, an Amazon Dot or a Ring doorbell, your mobile phone, PlayStation, or Oyster card will fill in any gaps. It’s hard, then, to imagine a crime where the sheer volume of digital evidence now available does not significantly increase the lines of enquiry during an investigation. Forces, however, are finding it hard to ride this wave of evidence, and this is where AI can help by identifying patterns and trends at speed.

Algorithms can also help police identify which neighbourhoods require extra resources on a Friday night or highlight those who are at high risk of causing trouble at a football match.

However, the use of AI continues to divide opinion. Whilst other sectors like healthcare, housing, retail, and finance have been quick to adopt new technologies to help improve performance and meet demand, understandably policing has taken a more cautious approach.

It is entirely reasonable to expect wariness when it comes to incorporating AI in decisions involving law enforcement. The rationale behind this is twofold: public concern that bias within data sets could entrench pre-existing discrimination by directing officers to patrol over-policed areas, and internal concern that using AI could marginalise or replace police officers’ expertise and professional judgement.

Improving understanding of what AI can bring to the table and transparency around its usage, and the pivotal role human decision-making plays, is vital to secure wider buy-in so its many positives can be embraced.

The policing challenge

Maintaining public confidence is one of the biggest challenges currently facing policing. Policing by consent remains as legitimate today as it was when enshrined as one of the nine Peelian Principles of Policing. Therefore, creating an ethical, legal, and regulatory framework around the use of AI in policing is essential.

Legislation around its deployment is under discussion, which will add clarity, but in the meantime AI shows real promise in adding value to decision-making in policing. The proviso is that officers are given the skills and expertise to interrogate and interpret the data accurately and effectively, and that this is combined with their professional judgement so its deployment can be proportionate and justified. This will help build greater understanding and acceptance of its deployment both inside and outside the police service.

This is a fast-moving debate, where quite rightly the ethical use of this powerful technology is put under scrutiny, but ultimately society will need to reach some level of agreement on its application. Police forces are under continual pressure to combat increasingly complex crimes with limited resources while the pattern of demand is constantly changing. Violence against women and girls looks set to be added to the strategic policing requirement, and machine learning can help officers identify patterns and trends and make connections that allow them to respond more quickly and accurately.

Fluid and flexible

Policing is about dealing with complexity, ambiguity, and inconsistency. In an unpredictable environment, AI can provide forces with hour-by-hour intelligence to allow them to intervene earlier. It can make a significant contribution to improving service delivery and enabling local policing to be even more effective by sifting through vast quantities of data and evidence. Based on the information pulled out, the AI can create a recommended action plan to support decision-making, which the officer can review and then implement or not, depending on their judgement and expertise.

More in-depth insight gives officers greater knowledge about their area, from community concerns to concentration of crimes, enabling them to respond with the right tactics.

Some trends are easier to manually spot than others depending on the scale and volume of the information available. Sometimes though they will not be picked up by officers quickly, as a crime could be logged or classified differently or spread out over several locations across a long period of time.

AI can help inform crime investigations by processing large quantities of data on past cases for officers to review. The technology is capable of spotting patterns, the circumstances around them and their quality to determine the likelihood of solving a case. By making connections between these factors, the technology can highlight similarities from a vast bank of previous cases, enabling officers to make their own informed decision based on a richer picture.
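The earlier point about incidents being logged or classified differently can be illustrated with a toy sketch. It assumes a hypothetical list of incident records and an invented mapping from log labels to a common category; none of the names or data come from a real policing system. Once the labels are harmonised, three incidents recorded under different labels in different places show up as a single cluster.

```python
from collections import Counter

# Hypothetical mapping from log labels to a common category (an assumption
# made for this sketch, not a real classification scheme).
CATEGORY_ALIASES = {
    "theft from vehicle": "vehicle crime",
    "vehicle interference": "vehicle crime",
    "theft from motor vehicle": "vehicle crime",
    "criminal damage": "criminal damage",
}

def count_by_category(records):
    """Count records per harmonised category, regardless of where they were logged."""
    counts = Counter()
    for record in records:
        # Normalise case and stray whitespace before looking up the alias.
        label = record["label"].strip().lower()
        counts[CATEGORY_ALIASES.get(label, label)] += 1
    return counts

# Invented example records spread across several locations.
records = [
    {"label": "Theft from vehicle", "location": "North"},
    {"label": "Vehicle interference", "location": "East"},
    {"label": "theft from motor vehicle ", "location": "South"},
    {"label": "Criminal damage", "location": "North"},
]

counts = count_by_category(records)
print(counts.most_common())
```

A real system would need far more than a lookup table, but the sketch shows why consistent categorisation matters before any pattern-spotting can begin.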

Data quality

Focussed deterrence depends on good quality data and one thing the police service is not short of is data.

A successful approach to evidence-based policing in supporting investigations relies heavily on the data available about a case. Although the quantity of data has never been an issue, what is vital is that the data is of good quality and that the right tools exist to sift the relevant information quickly and meticulously, which is where AI comes in.

To ensure high-quality data feeds into AI, there needs to be a reliable and transparent way of capturing that data. However, data capture can be a challenge in a busy control room or when police officers are having to think on their feet at the scene of a crime.

In the fast-moving and complex environment of policing, there is scope for technology to capture and store information at scale. For example, footage from the body-worn cameras of officers attending a routine burglary or an incident of affray can provide valuable data on whether a crime should be investigated. This information can be processed by AI and reviewed by officers later to make more informed decisions on the best way to proceed.

The heavy but necessary admin side of policing can contribute to officer burnout as well as reduce officers’ capacity to carry out mission-critical tasks. AI can help by processing and sifting this data to find relevant content that can be used to help solve a crime.

The importance of transparency

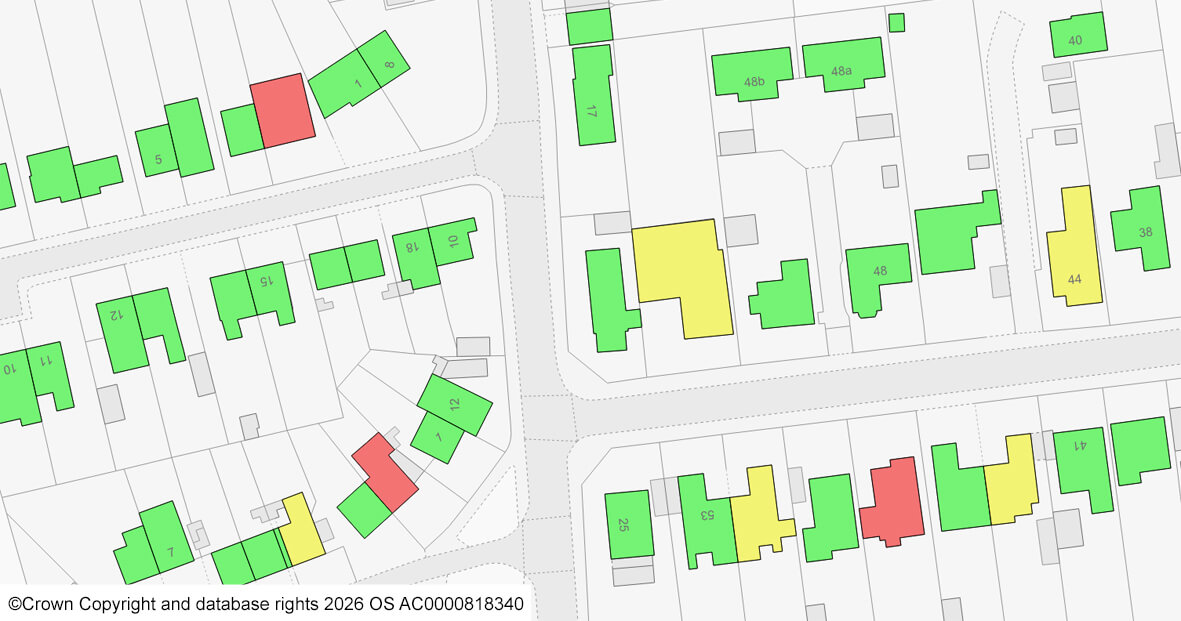

Using AI more widely in policing would be a gamechanger in targeting resources more effectively by spotting shifting patterns more easily. Officers can then take an informed decision as to the appropriate response. It will also provide a broader context to help identify why a particular crime pattern is occurring within an area. For example, is an increase in anti-social behaviour attributable to more drug dealing and taking in the area, and an indication of County Lines activity?

To avoid AI being perceived as a ‘black box’ process in which data is fed in and results are churned out, it is important to make the technology explainable. This helps to demystify AI by looking at all the features which contribute to achieving a result.
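As a minimal sketch of what "explainable" can mean in practice, the example below scores an area with a simple weighted sum and reports how much each feature contributed to the result. The feature names, weights, and input values are all invented for illustration and do not come from any real policing model; real systems are typically more complex and need dedicated explanation methods.

```python
# Illustrative only: these feature names and weights are assumptions made
# for this sketch, not values from any deployed system.
WEIGHTS = {
    "reports_last_30_days": 0.5,
    "repeat_location": 1.0,
    "late_night_share": 2.0,
}

def explain_score(features):
    """Return the total score and each feature's contribution, largest first."""
    contributions = {name: WEIGHTS[name] * value for name, value in features.items()}
    total = sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return total, ranked

# Hypothetical inputs for a single area.
area = {"reports_last_30_days": 6, "repeat_location": 1, "late_night_share": 0.25}
score, ranked = explain_score(area)

print(f"score = {score}")
for name, contribution in ranked:
    print(f"  {name}: {contribution}")
```

Listing the contributions alongside the score lets a reviewer see which inputs drove a result and challenge any that look inappropriate.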

There should be an awareness of the data streams which are used in machine learning technology regarding what is included and what is left out. Greater transparency will help to promote understanding of how the algorithms identify patterns and make predictions and allows for systems to be continually refined.

Some encouraging advances are taking place which build greater transparency into the use of technology and how it can help to inform decisions about offender risk. One of these is ALGO-CARE, developed by Sheffield Hallam University, which is a model for algorithmic accountability and has been adopted by the NPCC as the national standard across the UK.

Using a decision-making framework like ALGO-CARE will help bring greater reassurance around the deployment of AI in policing, as it creates more rigour and transparency around why the technology is being deployed. For instance, does the algorithm match the policing aim? What other decision-making mechanisms are in place to add objectivity to any decisions made because of the algorithm? And what additional oversight can be put in place to identify bias?

Modern policing

Innovation in AI and machine learning is evolving at pace and there are many ways this emerging technology can support decision-making in policing. Using AI systems in policing will not eliminate humans from the equation, but it will instead give officers the vital information they need to make informed decisions to create a safer society.

Although it is no magic bullet, officers could spend their time more effectively by tackling what the data is showing. For example, why are violent crimes more difficult to solve in some areas than others? It could be there is less trust in policing within a particular community, so giving officers more time to establish greater trust could have a transformational effect on the investigation of crimes in that area going forward.

Policing is not a passive participant in this evolving conversation. Forces generate vast quantities of the data needed and, within an agreed ethical and regulatory framework, can build a comprehensive evidence base founded on scientific principles to give validity to the deployment of AI.

Greater use of AI in policing is not without its challenges, but more transparency and fuller explanation of how it works alongside officers, rather than instead of them, will help foster a more widespread acceptance of this modern policing tool.

Find out more about AI technology in policing

As AI technology becomes more widely used, we here at NEC are taking a deeper look into its opportunities in policing.

You can find out more about how AI technology can provide insight to support officers in our article How artificial intelligence can enhance human decision-making in policing.

If you’re interested in how our operational police software can help your force, contact our team today.